A collaboration between: Caltech, CERN, FNAL, UMichigan, UFlorida, and many others

Physicists at the LHC will break new ground at the high energy frontier when the accelerator and the experiments CMS and ATLAS begin operation in 2008, searching for the Higgs particles thought to be responsible for mass in the universe, for supersymmetry, and for other fundamentally new phenomena bearing on the nature of matter and spacetime. In order to fully exploit the potential wealth of scientific discoveries, a global-scale grid system has been developed that aims to harness the combined computational power and storage capacity of 11 major "Tier1" centers and 120 "Tier2" centers sited at laboratories and universities throughout the world, in order to process, distribute, and analyze unprecedented data volumes, rising from tens to 1000 petabytes over the coming years. This demonstration will preview the efficient use of the long range, high capacity networks which are at the heart of this system, using state of the art applications developed by Caltech and its partners for: high speed data transport, where a single rack of low cost servers can match, if not exceed, the capacity of all of the 10 Gigabit/sec wide area network links coming into SciNet at SC07; real-time distributed physics applications; grid and network monitoring systems; and Caltech's recently released EVO system for global-scale collaboration.

We will demonstrate a distributed analysis of LHC event data using multiple 10 Gbit/sec links across the US and to Europe, Latin America and Asia. The demonstration will use a client ROOT process that communicates with many distributed "ROOTlets" through the Clarens Grid Portal. The ROOTlets at Reno, Caltech, and on the TeraGrid will operate on local data, producing analysis result files of event data that will be automatically aggregated on the client at Reno. We will also operate a purpose-built ROOTlet cluster at Reno, which will be used to demonstrate the scalability of this type of cluster-based event analysis.
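The scatter-and-aggregate pattern described above can be illustrated with a minimal Python sketch. This is not the ROOT or Clarens API: the functions `run_rootlet`, `aggregate`, and `distributed_analysis`, the sample energies, and the 10 GeV binning are all hypothetical, and local threads stand in for the remote ROOTlets. Each worker histograms only its own site's data, and the client merges the partial results.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

# Hypothetical per-site event energies (GeV); real ROOTlets would read local event files.
SITE_DATA = {
    "Reno": [12.0, 47.5, 125.3],
    "Caltech": [125.1, 91.2],
    "TeraGrid": [91.0, 18.4, 124.9],
}

def run_rootlet(site, events):
    """Stand-in for a remote ROOTlet: histogram local energies into 10 GeV bins."""
    hist = Counter()
    for energy in events:
        bin_low_edge = int(energy // 10) * 10
        hist[bin_low_edge] += 1
    return hist

def aggregate(partials):
    """Client-side merge: sum the per-site histograms bin by bin."""
    total = Counter()
    for hist in partials:
        total.update(hist)
    return total

def distributed_analysis(site_data):
    """Dispatch one task per site, then aggregate the partial results on the client."""
    with ThreadPoolExecutor(max_workers=len(site_data)) as pool:
        futures = [pool.submit(run_rootlet, site, events)
                   for site, events in site_data.items()]
        return aggregate(f.result() for f in futures)
```

Calling `distributed_analysis(SITE_DATA)` returns a single merged histogram. Only the small partial results travel back to the client, which is the property that lets this style of analysis scale with the number of sites.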

Continental and transatlantic networking for the LHC currently involves more than a dozen 10 Gbps links. This number will approximately double by 2010. Storage-to-storage saturation of 10 Gbps links has already been demonstrated in a production-ready setting by Fermilab and some of the universities hosting Tier2 centers, notably Nebraska. In a recent demonstration with Internet2, these flows were switched using Fermilab and Caltech's "LambdaStation" software.

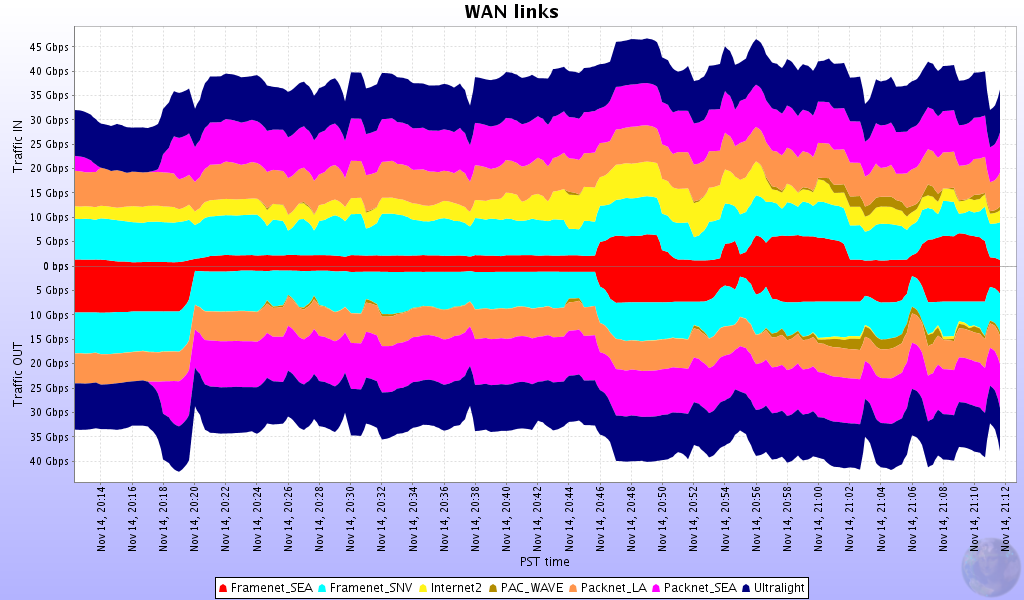

An open source Java application developed by Caltech (Fast Data Transfer, or FDT) permits stable reading and writing with TCP at disk read/write speeds over long distance networks for the first time. At SC07 Caltech will target an aggregate flow of 70 Gbps or more from a single rack of low cost computational nodes using seven 10 Gbit/sec links to the Caltech HEP/CACR booth on the show floor. Additional flows will use the links simultaneously in the reverse direction, in support of real-time data analysis. These data flows will be made up of disk <-> disk, disk <-> storage, and storage <-> storage transfers between high performance disk systems and larger dCache storage systems located at Reno, Caltech, University of Michigan, USP (Sao Paulo, Brazil), UERJ (Rio de Janeiro, Brazil), KISTI (Korea), StarLight/Fermilab, CERN, and the University of Florida.
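To put the 70 Gbps target in perspective, the following back-of-the-envelope sketch computes the aggregate rate of the seven links and the ideal time needed to move a dataset at that rate. It assumes every link runs at its full nominal rate with no protocol or disk overhead, so the figures are lower bounds; the function names and the example dataset sizes are illustrative only.

```python
def aggregate_gbps(links, gbps_per_link=10.0):
    """Nominal aggregate rate of several identical links, in Gbit/sec."""
    return links * gbps_per_link

def transfer_seconds(size_bytes, rate_gbps):
    """Ideal transfer time: bits to move divided by bits per second."""
    return size_bytes * 8 / (rate_gbps * 1e9)

rate = aggregate_gbps(7)                          # seven 10 Gbit/sec links
one_terabyte = transfer_seconds(1e12, rate)       # about 114 seconds
one_petabyte_hours = transfer_seconds(1e15, rate) / 3600  # about 32 hours
```

Under these idealized assumptions a terabyte crosses the links in roughly two minutes, while a petabyte still takes more than a day.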

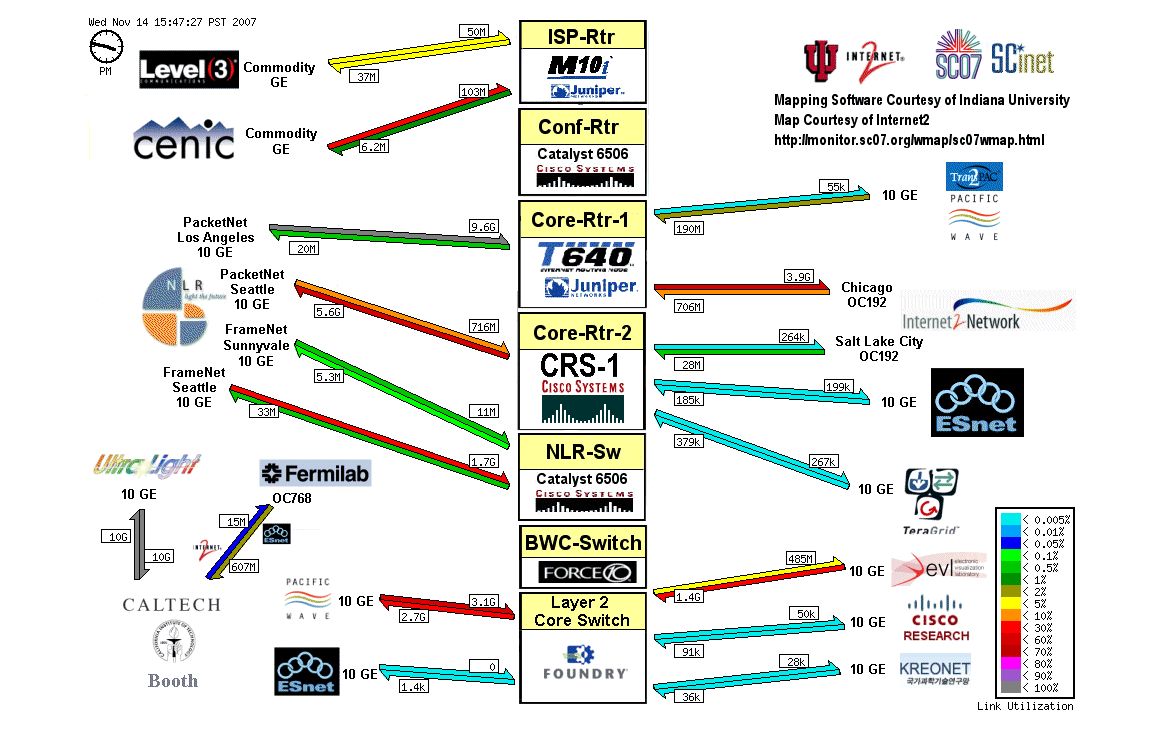

We will also preview the use of dynamic, adjustable network channels based on emerging standards (VCAT, LCAS), directing multi-Gigabit/sec data flows along network paths that are monitored and tracked end-to-end in real-time by Caltech's MonALISA system. The automatic provisioning, progress tracking, load balancing and channel adjustment overseen by autonomous agents could preview the way worldwide grid systems are operated and managed in the next decade.
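As a rough illustration of what channel adjustment by an agent could look like, the sketch below resizes a channel one member at a time based on measured utilization, in the spirit of LCAS adding or removing members of a virtually concatenated group. It is not MonALISA code: the function name, the utilization thresholds, and the member limits are hypothetical values chosen for the example.

```python
def adjust_members(current, utilization, min_members=1, max_members=64,
                   high=0.9, low=0.5):
    """Return the new member count for a channel.

    current      -- number of members currently in the channel
    utilization  -- measured load as a fraction of current capacity (0.0 to 1.0)
    high, low    -- hypothetical thresholds for growing or shrinking the channel
    """
    if utilization > high and current < max_members:
        return current + 1   # channel is nearly full: add one member
    if utilization < low and current > min_members:
        return current - 1   # channel is mostly idle: release one member
    return current           # within the comfortable band: leave unchanged
```

For example, `adjust_members(4, 0.95)` grows the channel to 5 members, while `adjust_members(4, 0.7)` leaves it at 4. Keeping a gap between the two thresholds avoids repeatedly adding and removing the same member when the load hovers near a single cutoff.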

See the Press Release: http://mr.caltech.edu/media/Press_Releases/PR13073.html

See the "official" page at: http://supercomputing.caltech.edu/

Photos from Michael Thomas: http://ultralight.caltech.edu/~wart/photos/sc2007/

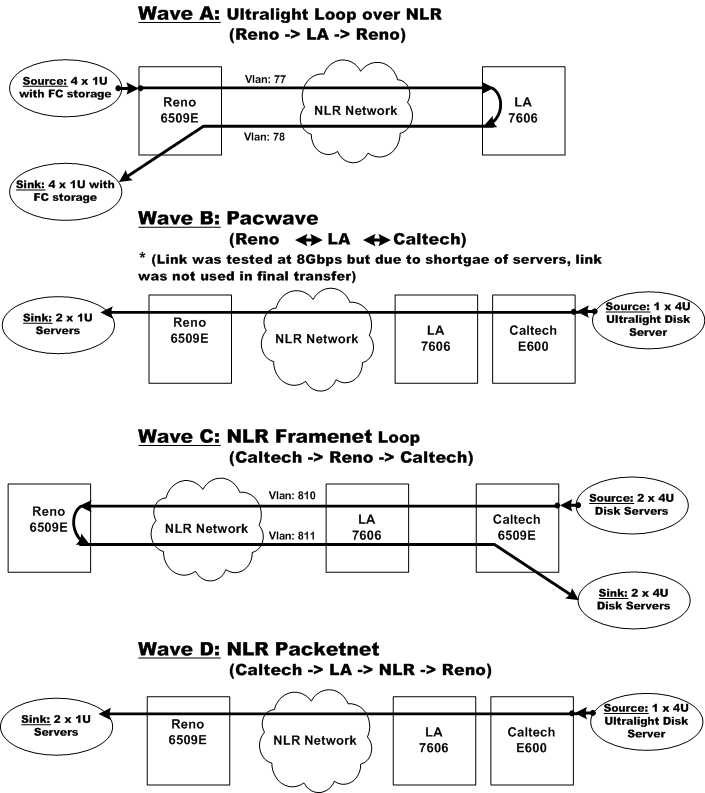

Flow diagrams from Azher Amin: