BANDWIDTH LUST: Distributed Particle Physics Analysis using Ultra High Speed TCP on the Grid

In this demonstration we will show several components of a Grid-enabled distributed Analysis Environment (GAE[I]) being developed to search for the Higgs particles thought to be responsible for mass in the universe, and for other signs of new physics processes to be explored using CERN[II]’s Large Hadron Collider (LHC), SLAC[III]’s BaBar, and FNAL[IV]’s CDF and D0. We use simulated events resulting from proton-proton collisions at 14 tera-electron volts (TeV) as they would appear in the LHC’s Compact Muon Solenoid (CMS) experiment, which is now under construction and will start collecting data in 2007.

The Grid is an ideal metaphor for the distributed computing challenges posed by experiments at the LHC. Enormous data volumes (many Petabytes, or millions of Gigabytes) are expected to accumulate rapidly when the LHC begins operating. Indeed, the existing experiments at SLAC and FNAL have already accumulated more than a Petabyte of data. Processing all the data centrally at the host laboratory is neither feasible nor desirable. Instead, the data must be distributed among collaborating institutes worldwide, thereby enabling the massive aggregate capacities of those distributed facilities to be brought to bear. The distribution of data between the host laboratory and those institutes is not one-way: large quantities of simulation data and analysis results need to return to the host laboratory and the other institutes as demand dictates. Indeed, we plan for a highly dynamic, workflow-oriented system that will operate under severe global resource constraints.
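To give a feel for the data volumes quoted above, the following minimal sketch converts a Petabyte into Gigabytes and estimates how long it would take to move one Petabyte at a sustained rate. The decimal unit definitions and the sample rates are illustrative assumptions, not figures taken from the experiments.

```python
# Back-of-envelope transfer times. The sample rates are illustrative assumptions.
GIGABYTE = 10**9    # bytes, decimal units assumed
PETABYTE = 10**15   # bytes, i.e. one million Gigabytes

SECONDS_PER_DAY = 86_400


def transfer_days(volume_bytes: float, rate_bytes_per_sec: float) -> float:
    """Return the days needed to move volume_bytes at a sustained rate."""
    return volume_bytes / rate_bytes_per_sec / SECONDS_PER_DAY


if __name__ == "__main__":
    print(f"Gigabytes per Petabyte: {PETABYTE // GIGABYTE}")
    for rate_gb in (0.1, 1.0, 10.0):  # hypothetical sustained rates in GB/s
        days = transfer_days(PETABYTE, rate_gb * GIGABYTE)
        print(f"{rate_gb:5.1f} GB/s -> {days:8.2f} days per Petabyte")
```

Even at a sustained Gigabyte per second, a single Petabyte takes more than eleven days to move, which is one way to see why both distribution and very high network throughput matter.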

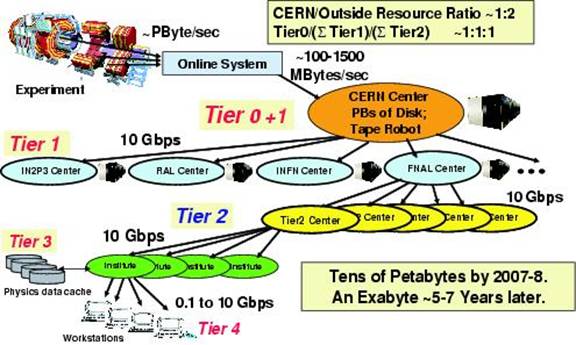

Our extensive systems modelling studies have determined that a hierarchical Data Grid is required.

CERN, SLAC and FNAL and the scientific Grid projects[V] in the

Our demonstration will show an example LHC physics analysis that makes use of several software and hardware components of the next-generation Data Grid being developed to simultaneously support the work of scientists resident in many world regions. Specifically, we will make use of Clarens[VIII], a Web services portal for scientific data and services developed at the California Institute of Technology (Caltech[VII]). The Clarens dataserver includes Grid-based authentication and services for a range of clients, from server-class systems through personal desktops and laptops to handheld PDA devices.

In the demonstration, we will use an analysis tool called ROOT[IX] as a Grid-authenticated Clarens client. Physics event collections, aggregated into large disk-resident files, will be replicated across wide area networks from Clarens servers situated in California, Geneva, Illinois and Florida to a server at SuperComputing 2003 (SC2003) in Phoenix, Arizona. The replication procedure will use ultra-high-speed variants of TCP/IP[X] that have been developed in the FAST project at Caltech. (Our team currently holds the Internet2 land speed records, which were set using these Ultraprotocols[XI].) As the event collection replicas arrive across the wide area network at the SC2003 server, the ROOT client will dynamically generate and update mass and other spectrograms, a typical physics analysis task when searching for new physics processes. We intend to achieve data replication and analysis rates of at least one Gigabyte per second during the demonstration.
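The idea of updating a mass distribution as replicated event collections arrive can be sketched as follows. This is a toy illustration in plain Python, not the ROOT or Clarens API: the `RunningHistogram` class, the `simulated_chunks` generator, the binning, and the toy resonance position are all hypothetical, and the "events" are random numbers rather than physics data.

```python
import random
from typing import Iterable, Iterator, List


class RunningHistogram:
    """Fixed-bin histogram that can be filled incrementally, chunk by chunk."""

    def __init__(self, low: float, high: float, nbins: int) -> None:
        self.low = low
        self.high = high
        self.nbins = nbins
        self.width = (high - low) / nbins
        self.counts: List[int] = [0] * nbins
        self.underflow = 0
        self.overflow = 0

    def fill(self, values: Iterable[float]) -> None:
        """Add one chunk of values without recomputing earlier chunks."""
        for v in values:
            if v < self.low:
                self.underflow += 1
            elif v >= self.high:
                self.overflow += 1
            else:
                # Clamp to guard against floating-point rounding at the upper edge.
                idx = min(int((v - self.low) / self.width), self.nbins - 1)
                self.counts[idx] += 1

    def entries(self) -> int:
        return sum(self.counts) + self.underflow + self.overflow


def simulated_chunks(n_chunks: int, chunk_size: int, seed: int = 1) -> Iterator[List[float]]:
    """Stand-in for arriving replicas: toy 'masses' in arbitrary units.

    A flat background plus a narrow peak at a made-up position of 120.
    """
    rng = random.Random(seed)
    for _ in range(n_chunks):
        chunk = [rng.uniform(50.0, 200.0) for _ in range(chunk_size)]
        chunk += [rng.gauss(120.0, 2.0) for _ in range(chunk_size // 10)]
        yield chunk


if __name__ == "__main__":
    hist = RunningHistogram(low=50.0, high=200.0, nbins=30)
    for i, chunk in enumerate(simulated_chunks(n_chunks=5, chunk_size=1000), start=1):
        hist.fill(chunk)  # update the histogram as each chunk "arrives"
        peak_bin = max(range(hist.nbins), key=hist.counts.__getitem__)
        print(f"after chunk {i}: {hist.entries()} entries, fullest bin index {peak_bin}")
```

Because each chunk only increments bin counters, the cost of an update depends on the size of the new chunk rather than on the total data received so far, which is what makes a continuously refreshed display practical while transfers are still in progress.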

In a variant of this demonstration, we will show a Pocket PC PDA device (an authenticated handheld client connected wirelessly to the Grid), running a small footprint Java-based analysis tool, in communication with the Clarens servers, capable of generating histograms and other representations of the physics data in response to data selections made by the user.

References